Contributor

What is a Data Lake: A Deep Dive Explanation

What You Will Learn in this Article

- You will learn about the various core definitions of the enterprise data lake as defined by the top experts in the field.

- You will be presented with the key use cases and challenges of data lakes for business intelligence teams.

- You will also learn about important considerations to keep in mind when implementing a data lake for an organization.

Why Should Companies Consider a Data Lake?

According to Gartner, companies only use one percent of their data for analytics purposes. This is not because companies choose to ignore the other 99% of their data. Rather, it is because most companies don’t know how to access and process their own data to extract value from it. As mentioned in our article on omnichannel AI, 54% of respondents in an Emarketer survey said their main business roadblock is the inability to analyze and make sense of their data. As a result, companies make many decisions based on a very incomplete picture of reality.

Creating a data lake is a great first step in uncovering new opportunities as well as unforeseen threats. If your organization doesn’t have a data lake at the moment, there has never been a better time to think about creating one. Data is becoming one of the most important strategic assets a company can use to yield growth and attempt to predict something about the future, especially in these highly unpredictable post-Covid-19 times. In fact, poor analytics exploitation might be hiding millions of dollars under your data.

Based on both professional client experience and thorough academic research, we hope this article will provide you with a deeper understanding of what a data lake is and how it can be implemented by your company. Our aim is to offer the professional analytics and IT community a solid conceptual base and practical advice to help you get started with your data lake project.

From Data Warehousing to Data Lakes

In the early 2000s, as data collection requirements increased, relational databases alone could no longer accommodate the size and diversity of data being stored by many companies and organizations. As a result, data warehouses and dimensional analysis models, notably OLAP (Online Analytical Processing), emerged as more suitable frameworks for business intelligence teams.

The multidimensional modelling needs of data warehousing in turn produced functional theories by pioneers like Ralph Kimball and Bill Inmon, which provided solutions for exploiting these new systems from multiple data sources.

This situation further evolved about 10 to 20 years ago, as companies like Google, Facebook, YouTube and Amazon grew exponentially in data size and structural diversity, which meant data management needed radical changes. This need gave rise to frameworks like Hadoop in 2006, along with MapReduce and eventually Apache Spark, and provided better solutions for big data infrastructures. As big data continued to grow and data management problems extended to a wider range of companies and organizations, it gave rise to the next stage in the evolution of file storage systems in the form of data lakes. These ecosystems could accommodate not just structured data, but also semi-structured and unstructured data, also referred to as polymorphic data structures.

What is a Data Lake?

As will be shown later in the article, the main use cases and implementation challenges surrounding the creation of a data lake are becoming well documented. However, there is still confusion over the definition of the data lake itself. First, is there a clear definition of what a data lake is? Unlike data warehouses, the structural definition of which is well established by the work (and debate) of Kimball and Inmon, it is more difficult to reach consensus on the functional definition of a data lake. For the most part, the concept emerged organically from industry needs.

The most common interpretation is probably that of James Dixon, founder and former technical director of Pentaho. Dixon is the one who coined the term “Data Lake” in 2010 and whose definition is among the ones most cited in the industry. According to Dixon, a data lake can be described as follows in relation to a “Data Mart”:

“If you think of a Data Mart as a store of bottled water, cleansed and packaged and structured for easy consumption, the Data Lake is a large body of water in a more natural state. The contents of the Data Lake stream in from a source to fill the lake, and various users of the lake can come to examine, dive in, or take samples.”

A slightly more structured definition was proposed during a debate between Tamara Dull and Anne Buff, most of which is available on the popular analytics website Kdnuggets.com. The definition used during the debate was as follows:

“A data lake is a storage repository that holds a vast amount of raw data in its native format, including structured, semi-structured, and unstructured data. The data structure and requirements are not defined until the data is needed.”
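The last sentence of that definition is often called schema-on-read. As a minimal sketch of the idea, assuming raw events arrive as JSON lines (the field names and the `read_with_schema` helper are hypothetical and used only for illustration), two consumers can project different structures from the same raw data at read time:

```python
import json

# Raw events land in the lake exactly as produced; no schema is enforced on write.
# (Hypothetical sample data for illustration only.)
RAW_EVENTS = [
    '{"user": "a1", "amount": "19.90", "channel": "web"}',
    '{"user": "b2", "amount": 5, "device": {"os": "ios"}}',
    '{"user": "c3"}',
]


def read_with_schema(raw_lines, schema):
    """Apply a schema at read time: select fields, cast them, use None when missing."""
    rows = []
    for line in raw_lines:
        record = json.loads(line)
        row = {}
        for field_name, cast in schema.items():
            value = record.get(field_name)
            row[field_name] = cast(value) if value is not None else None
        rows.append(row)
    return rows


# Two consumers define their own structure only when they need the data.
finance_view = read_with_schema(RAW_EVENTS, {"user": str, "amount": float})
marketing_view = read_with_schema(RAW_EVENTS, {"user": str, "channel": str})
```

Nothing in the raw store had to change to serve the second consumer, which is the flexibility the definition points to. The trade-off is that every reader has to handle missing or inconsistent fields.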

In the scientific literature, an interpretation of the data lake was offered in a paper by Snezhana Sulova, of the University of Economics – Varna (Dept. of Informatics), who describes it more as a data storage strategy. Another pair of PhD researchers, Cédrine Madera and Anne Laurent, also contributed an elaborate technical definition of the data lake as a new stage in the evolution of information architecture for decision support systems. The Madera and Laurent paper meticulously reviews a large number of fundamental properties associated with data lakes. However, because their definition was written in the earlier stages of the Hadoop and MapReduce frameworks, the core data processing method they associate with data lakes is strictly limited to batch loading techniques. This view is now outdated, as it doesn’t take into account more recent advances in real-time streaming, such as Apache Spark, Kafka, Google’s Pub/Sub or Apache Beam. These streaming frameworks have become a fundamental component in the evolution of data lakes in the last few years. Real-time streaming has also further expanded the volume of data collected, notably by simplifying the integration of sensor data from IoT (Internet of Things) devices.

The Concept of ‘Data Gravity’

Another distinguishing characteristic of data lakes is a bit more esoteric, and deals with a novel theory called data gravity. The idea was developed by Dave McCrory in 2010 and has since made its way into professional and academic literature, as well as becoming the topic of talks and panel discussions at various IT conferences. McCrory even developed a mathematical formula to support his theory. In essence, data gravity maintains that data has mass and, by extension, a gravitational pull of its own. According to McCrory, “This attraction (gravitational force) is caused by the need for services and applications to have higher bandwidth and/or lower latency access to the data.” In a data lake, the concept of data gravity is often discussed in relation to the difficulty of moving data after it reaches a certain “critical mass.”

The Role of Lakes in the Analytics Ecosystem

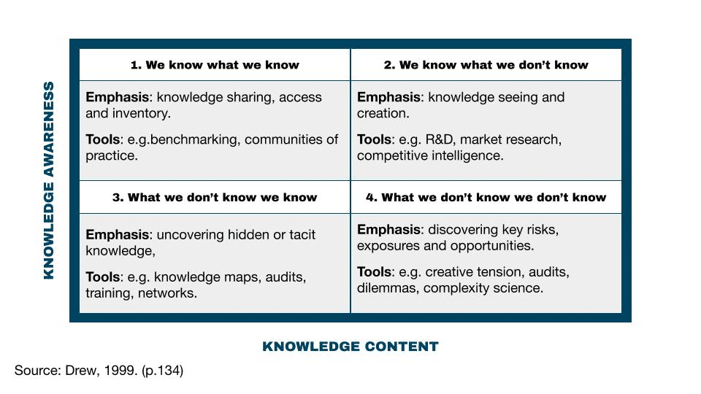

All of those explanations are helpful in better framing the role of the data lake in business and marketing intelligence environments. The idea would be to create a data mining pool without the rigid and sometimes restrictive rules of warehouse schemas. Based on this definition, it could be interesting to classify the role of the data lake according to Stephen Drew’s knowledge map:

Based on this model, the data lake can cover all quadrants of the knowledge map of an organization, with its highest value and focus probably being in quadrant no. 4: “What we don’t know we don’t know.” Indeed, this is what big data in its raw state has the potential to bring to the fore within a company. This is why these lakes are very often exploited on an exploratory basis by data scientists, whose objective is the discovery of new data patterns, which can give rise to new business or marketing solutions, and more importantly, identify new questions, thereby yielding strategic value for a business or marketing intelligence team.

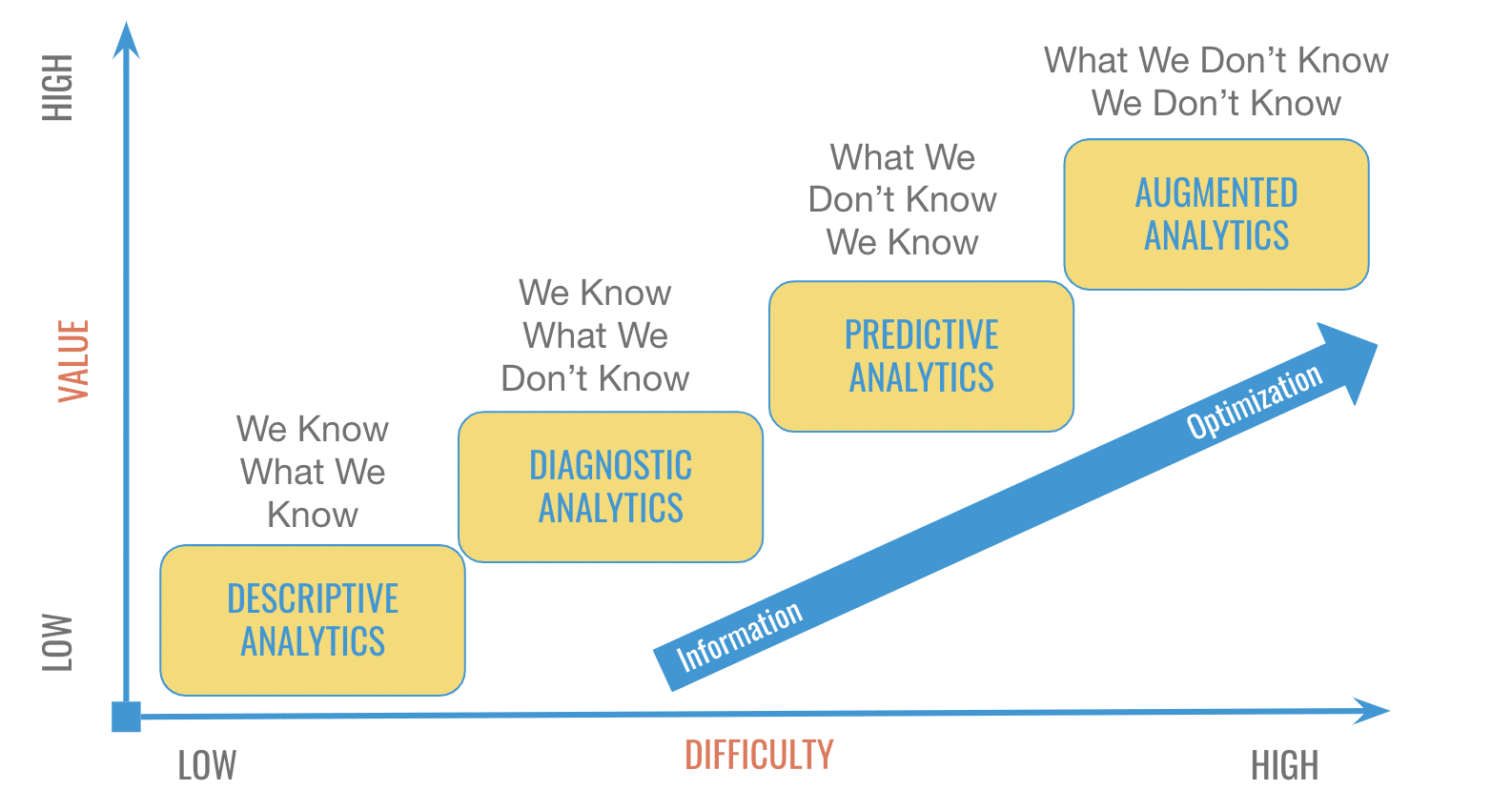

If your company doesn’t have a data lake or a data warehouse, deciding which one to build first in your analytics ecosystem will depend on many factors, notably the speed at which your organization can reach analytics maturity. To learn more about the difference between data lakes and data warehouses, you can read our article on the subject: The Difference Between Data Lakes and Data Warehouses.

Typically, an organization will start in the early stages by mastering descriptive and diagnostic analytics, which can provide a clear picture of performance. Those needs are best met with a solid data warehouse implementation. As the analytics practice matures, the company can gradually move toward predictive analytics and ultimately prepare itself for augmented analytics (e.g. natural language processing and artificial intelligence). Those needs are best met by the implementation of a data lake environment. Each stage of maturity brings incremental value to the business. However, each stage also requires increasing levels of complexity and technical expertise. As such, it might be wise to crawl, or at least walk, before you run toward a more advanced analytics ecosystem.

The Do’s and Don’ts of Data Lakes

If you have been considering the creation of a data lake for your organization, here is a short list of the major Do’s and Don’ts to keep in mind when planning your project:

The Do’s

- Outline the key business questions and the main objectives you want to achieve with a data lake, more specifically the things you currently aren’t able to achieve with your existing data warehouse or databases;

- Define important analytics use cases you will need to fulfill and assess the business value and impact of those use cases before moving forward;

- Assess the range of data sources you will need to integrate into a data lake environment and map a preliminary metadata catalog.
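To make the last point more concrete, here is a minimal sketch of what a preliminary metadata catalog could look like at the planning stage. The `CatalogEntry` fields, source names and helper functions are assumptions chosen for illustration, not a standard catalog format:

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """One data source in a preliminary catalog (all fields are illustrative)."""
    name: str
    owner: str
    data_format: str   # e.g. "csv", "json", "audio"
    structure: str     # "structured", "semi-structured" or "unstructured"
    refresh: str       # e.g. "daily batch", "streaming"
    use_cases: list = field(default_factory=list)


# Hypothetical inventory of sources gathered during planning.
catalog = [
    CatalogEntry("crm_contacts", "sales-ops", "csv", "structured",
                 "daily batch", ["churn model"]),
    CatalogEntry("web_clickstream", "marketing", "json", "semi-structured",
                 "streaming", ["attribution", "churn model"]),
    CatalogEntry("support_calls", "customer-care", "audio", "unstructured",
                 "weekly batch"),
]


def sources_for(use_case, entries):
    """List the sources that a given analytics use case depends on."""
    return [e.name for e in entries if use_case in e.use_cases]


def orphans(entries):
    """List sources not yet tied to any use case (candidates to defer)."""
    return [e.name for e in entries if not e.use_cases]


churn_sources = sources_for("churn model", catalog)
unassigned = orphans(catalog)
```

Linking each source to at least one use case keeps the catalog tied to the business questions outlined in the first two points, and flags sources that may not need to enter the lake in a first phase.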

The Don’ts

- Don’t try to implement your data lake without first testing a proof of concept, like building a “mini lake” or pond for a specific department and use case;

- Don’t get blinded by the charming promises of tech vendors and cloud providers who will promise you a data lake within only a few clicks of a button;

- Don’t keep the data lake project exclusively in the hands of IT or BI or Marketing or any one specific team. A data lake project needs to be a cross-functional and especially a cross-team effort!

Now that we have a clearer understanding and definition of what a data lake is and its role in the analytics ecosystem, the next important step is to understand the main use cases and challenges of its implementation.

Core Use Cases and Challenges

In light of the lack of formal empirical studies on the subject of data lakes, Marilex Rea Llave, Assistant Professor at the University of Agder (UiA), published an interesting article in 2018, in which she presents a view from the field about why and how data lakes are being used by business intelligence practitioners. Her approach consisted of analyzing interviews she conducted with a group of 12 business intelligence experts from Norway. These experts came from various industries, which helps show the contribution of data lakes in different environments and business realities. The article provides interesting insights into the following question: What are the main use cases and implementation challenges of data lakes?

Based on the interviews conducted by Llave, there are three main use cases for data lakes by business intelligence experts:

- Used as staging areas or sources for data warehouses

- Used as experimental platforms for data scientists or analysts

- Used as a direct source of self-service BI to access atypical data

Her analysis also identified five major challenges associated with the implementation of these lakes, including:

- Data stewardship. Who is responsible for the lake and who maintains it within the organization?

- Data governance. How can we ensure the governance of a collection of data that is both massive and very disparate?

- Professional talent. What type of hiring profile should be considered to manage the infrastructure of a lake?

- Data quality. How can we manage the quality of the data entering the lake given its lack of organization compared to warehouses?

- Data retrieval. How do you pull data (e.g. IoT sensor data) out of a lake in order to derive analytical value from it?

All of these challenges are important to consider when designing the implementation of a data lake within an organization. Other authors have lists similar to that of Llave, notably Miloslavskaya and Tolstoy, whose article outlines twelve key factors to consider during an implementation. If you are interested in learning more about those twelve factors, you can look up their paper in the references at the end of this article.

A Data Lake Design Framework

That being said, while key factors and challenges have been well outlined by the academics quoted above, this still doesn’t give companies a clear standard framework with which to tackle those challenges. Moreover, contrary to data warehouses, there still isn’t a clear-cut blueprint for data lake design. The best effort yet is probably found in a 2019 book written by Alex Gorelik called ‘The Enterprise Big Data Lake: Delivering the Promise of Big Data and Data Science’. The book has only 224 pages, but provides a solid foundation for IT and data engineers to work from. However, it isn’t exactly a framework in the strictest sense of the word.

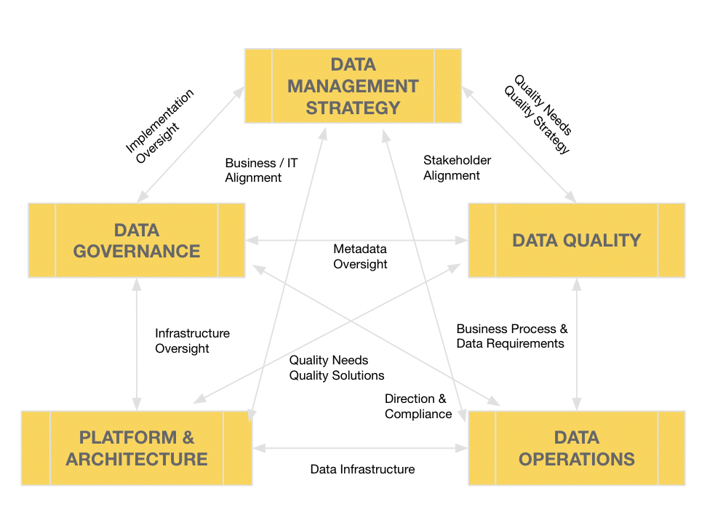

For a fuller process to guide your data lake project, a very suitable companion to Gorelik’s book is the set of six key themes of CMMI’s Data Management Maturity (DMM) model. This framework provides a Fortune 500 tested (and even NASA tested) methodology for IT and data management teams to work from when planning a large-scale project like a data lake. Here is what the relationship between the six data management themes of the DMM model looks like:

The Data Lake Architecture: In the Cloud or On-Premise?

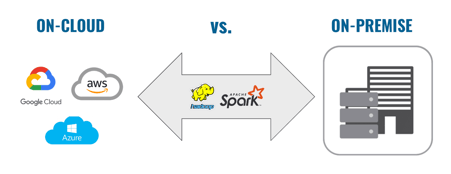

Once the business objectives and use cases have been identified, along with the IT management framework (i.e. DMM), the time has come to consider what kind of architecture will be deployed for the lake. This decision is critical to the success of the project.

Among the key decisions to be made is the question of going serverless using the cloud or keeping everything on premise in large data centres. Typically, organizations with high security considerations will favour on-premise, while more agile and nimble companies will prefer the cloud. A third option is going hybrid and mixing up the two, in order to get the best of both worlds.

Cloud services are hugely popular these days and tend to suit many use cases and workloads. This is likely why Deloitte reports that one-third (34%) of companies claim to have already fully implemented data modernization in the cloud. Another 50% of companies say they are currently in the process of modernizing their data management.

The question is, how can you make the right choice when modernizing your infrastructure or deploying new data services? And how can you make sure your data is secure? What needs to be remembered is that each path has its own pros and cons. There is no magic bullet. Moreover, it is misleading to believe that going cloud means choosing a simple option. The cloud may certainly be simpler than implementing on-premise, yet that doesn’t mean it is simple, per se. In fact, 47% of cloud professionals think complexity is the primary risk to ROI (Deloitte, 2019). Therefore, conducting a functional analysis of your core use cases, either with your own IT team or with an external consultant, will save you many headaches down the road. Data lake architectures can be very challenging to maintain, and a major risk is turning your lake into a data swamp.

Moreover, if you do choose to go with a cloud option, as most companies will, it is important to evaluate the nature and importance of the more prominent data sources in the company. This is because you might find some cloud vendors to be better suited to certain types of data. For example, if your company is deeply invested in a Microsoft ecosystem, Azure may be a better option for you. On the other hand, if your company has a strong digital marketing footprint, with lots of data coming through Google Analytics, perhaps Google Cloud might be a better fit.

Another question is, how many of your data sources already have pre-existing API connections you can leverage? How many sources will need a custom API solution? Which one of the cloud providers you are considering has a partner integration with most of the data sources or platforms used by your company? All those questions can be answered through a well planned functional analysis of your use cases and business objectives.
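One simple way to start answering these questions is to tabulate, for each candidate provider, which of your sources already have a connector and which would need custom work. The sketch below assumes an entirely hypothetical inventory: the source names, the placeholder providers `provider_a` and `provider_b`, and their connector lists are invented for illustration and say nothing about any real vendor:

```python
# Hypothetical list of the data sources the lake would need to integrate.
SOURCES = ["crm", "web_analytics", "erp", "iot_sensors", "email_platform"]

# Hypothetical connectors offered out of the box by each candidate provider.
NATIVE_CONNECTORS = {
    "provider_a": {"crm", "erp", "email_platform"},
    "provider_b": {"web_analytics", "crm", "iot_sensors", "email_platform"},
}


def coverage(sources, connectors):
    """For each provider, split sources into covered ones and ones needing a custom API."""
    report = {}
    for provider, supported in connectors.items():
        covered = [s for s in sources if s in supported]
        custom = [s for s in sources if s not in supported]
        report[provider] = {
            "covered": covered,
            "needs_custom_api": custom,
            "ratio": len(covered) / len(sources),
        }
    return report


report = coverage(SOURCES, NATIVE_CONNECTORS)

# Provider with the highest share of sources covered by existing connectors.
best = max(report, key=lambda p: report[p]["ratio"])
```

A raw coverage ratio is only a starting point. In practice, sources would likely be weighted by business importance, since one missing connector for a critical system can outweigh several covered minor ones.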

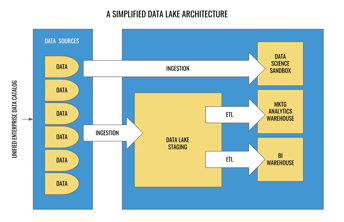

In terms of architecture, the plans vary tremendously from one organization to another. However, a simplified version of just about any data lake architecture should include the following components:

- A unified data catalog comprising all sources;

- A staging area where data will be ingested for treatment before moving onto an ETL job or other transformations;

- A data science sandbox for raw exploration that will usually bypass the staging area;

- One or more dedicated data warehouses with ETL jobs for business intelligence and/or marketing analytics reporting.
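To illustrate how these four components relate to each other, here is a toy in-memory sketch. The `MiniLake` class, its method names and the sample order data are hypothetical; a real lake would rely on object storage, a catalog service and an orchestration tool rather than Python dictionaries:

```python
from collections import defaultdict


class MiniLake:
    """Toy in-memory model of the four components listed above."""

    def __init__(self):
        self.catalog = {}                   # unified catalog: source -> description
        self.raw = defaultdict(list)        # raw data, read directly by the sandbox
        self.staging = defaultdict(list)    # staging area feeding ETL jobs
        self.warehouse = defaultdict(list)  # curated tables for BI reporting

    def ingest(self, source, description, records):
        """Register the source in the catalog and land its records."""
        self.catalog[source] = description
        self.raw[source].extend(records)
        self.staging[source].extend(records)

    def sandbox_read(self, source):
        """Data science access to raw records, bypassing the staging area."""
        return list(self.raw[source])

    def run_etl(self, source, table, transform):
        """Transform staged records into warehouse rows, dropping rejected ones."""
        for record in self.staging[source]:
            row = transform(record)
            if row is not None:
                self.warehouse[table].append(row)
        self.staging[source].clear()


def clean_order(record):
    """Example transformation: reject orders without a total, cast the rest."""
    if record["total"] is None:
        return None
    return {"order_id": record["id"], "total": float(record["total"])}


lake = MiniLake()
lake.ingest("orders", "Raw order events from the web shop",
            [{"id": 1, "total": "20.5"}, {"id": 2, "total": None}])
lake.run_etl("orders", "fact_orders", clean_order)
```

In this sketch the sandbox still sees both raw orders, including the incomplete one, while the warehouse table only receives the cleaned row. That is the division of labour between exploration and reporting described in the list.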

We hope this deep dive article was helpful to you and gives you a better understanding of what a data lake is and what it can offer to your organization. If you’re interested in learning more about the subject or if you need help to get started with your project, don’t hesitate to reach out to our Analytics and Data Science team. In the meantime, you can go even further in your research by consulting all the bibliographic references to this article found below.

References

- ‘A Brief History of Data Lakes – DATAVERSITY’, available at https://www.dataversity.net/brief-history-data-lakes/, last accessed on Feb. 24, 2020.

- ‘Charting the data lake: Using the data models with schema-on-read and schema-on-write’, available at https://www.ibmbigdatahub.com/blog/charting-data-lake-using-data-models-schema-read-and-schema-write, last accessed on Feb. 24, 2020.

- ‘Data Gravity – in the Clouds’, available at https://datagravitas.com/2010/12/07/data-gravity-in-the-clouds/, last accessed on Feb. 28, 2020.

- ‘Data Lake vs Data Warehouse: Key differences’, available at https://www.kdnuggets.com/2015/09/data-lake-vs-data-warehouse-key-differences.html, last accessed on Feb. 28, 2020.

- ‘Data Warehouse Design – Inmon versus Kimball – TDAN.com’, available at https://tdan.com/data-warehouse-design-inmon-versus-kimball/20300, last accessed on Feb. 27, 2020.

- Ahmed E., Yaqoob I., Abaker Targio Hashem I., Khan I., Abdalla Ahmed A.I., Imran M., Vasilakos A.V., 2017, ‘The role of big data analytics in Internet of Things’, Computer Networks, Volume 129, Part 2, p. 459-471.

- Dean J., Ghemawat S., 2004, ‘MapReduce: Simplified Data Processing on Large Clusters’, OSDI’04: Sixth Symposium on Operating System Design and Implementation, p. 137–150.

- Drew S., 1999, ‘Building Knowledge Management into Strategy: Making Sense of a New Perspective’, Long Range Planning, 32 (1), p.130-136.

- Khine P.P., Wang Z.S., 2018, ‘Data lake: a new ideology in big data era’, ITM Web of Conferences, Volume 17, 03025, last accessed on Feb. 19, 2020.

- Kimball R., 1996, ‘The Data Warehouse Toolkit: Practical Techniques for Building Dimensional Data Warehouses’, John Wiley & Sons, Inc., USA.

- Llave M.R., 2018, ‘Data lakes in business intelligence: reporting from the trenches’, Procedia Computer Science, Volume 138, p. 516-524.

- Madera C., Laurent A., 2019, ‘The Next Information Architecture Evolution: The Data Lake Wave’, MEDES: Management of Digital EcoSystems, HAL Id: lirmm-01399005.

- Miloslavskaya N., Tolstoy A., 2016, ‘Big Data, Fast Data and Data Lake Concepts’, Procedia Computer Science, Volume 88, p. 300-305.

- Shvachko K., Kuang H., Radia S., Chansler R., 2010, ‘The Hadoop Distributed File System’, IEEE 26th Symposium on Mass Storage Systems and Technologies (MSST), p. 1–10.

- Sulova S., 2019, ‘The Usage of Data Lake for Business Intelligence Data Analysis’, Conferences of the Department Informatics, Publishing house Science and Economics Varna, Issue 1, p. 135-144.

- Walker C., Alrehamy H., 2015, ‘Personal Data Lake with Data Gravity Pull’, IEEE Fifth International Conference on Big Data and Cloud Computing, p. 160–167.

- Yessad L., Labiod A., 2016, ‘Comparative study of data warehouses modeling approaches: Inmon, Kimball and Data Vault’, 2016 International Conference on System Reliability and Science (ICSRS), p. 95–99.
