Content Marketing Strategist

Is it possible to predict a page’s Google ranking?

Around 2018, through François Goube, I discovered the founders of Data SEO Labs, Rémi Bacha and Vincent Terrasi. Their mission is simple but ambitious: to take on, using a mix of SEO and data science, the all-powerful RankBrain, the first Google algorithm capable of understanding a user’s search intent and displaying personalized results in real time. To accomplish it, they set out to predict, as accurately as possible, how likely a page is to appear on the first SERP on Google. Thanks to the magic of data science, they currently report a success rate of up to 90%.

SEO in 2020

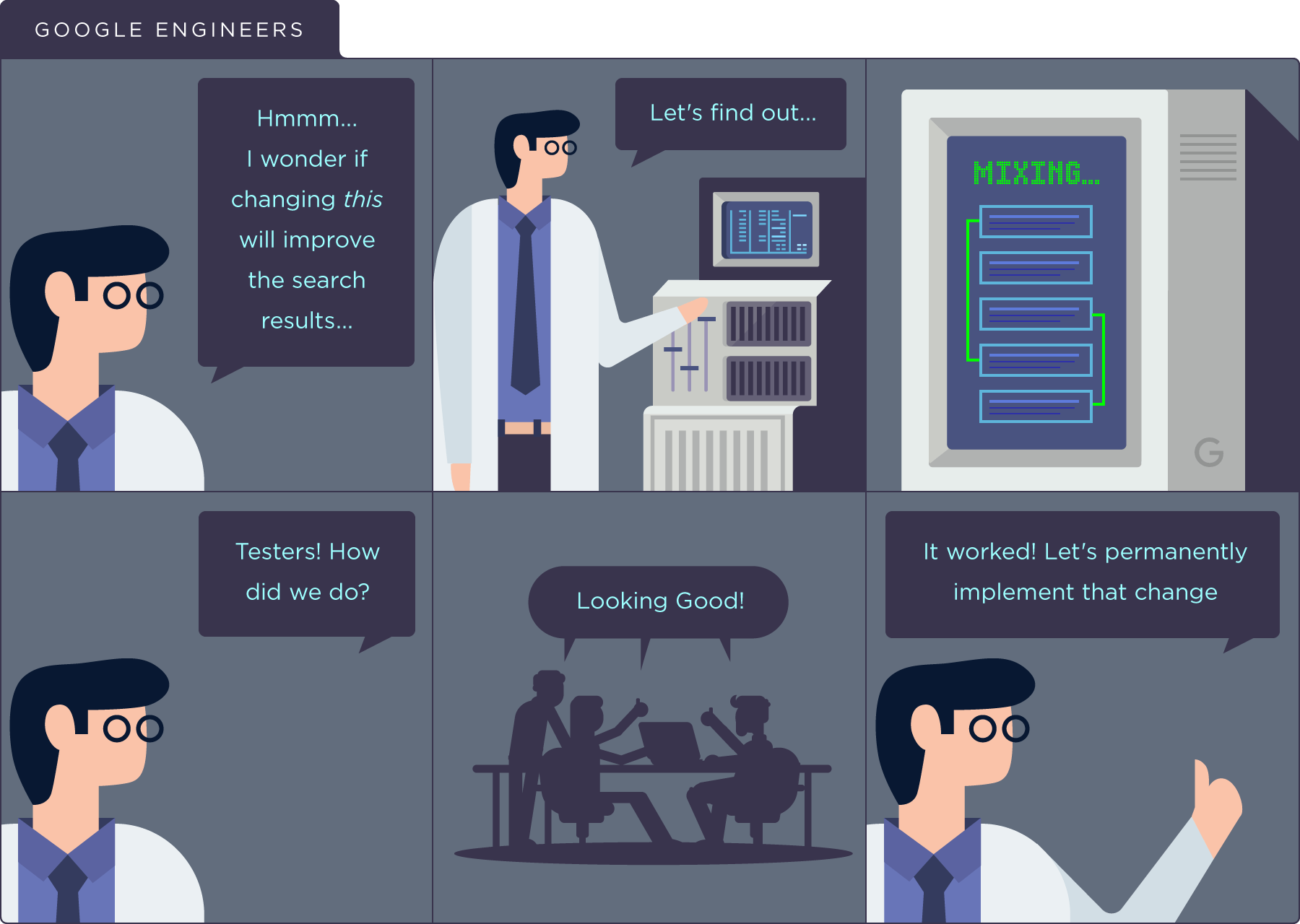

With the arrival of RankBrain in 2015, Google changed the way its search engine worked. Before, indexing criteria were developed, tested and analyzed by humans. According to Backlinko (and its amazing guide to RankBrain), this looked like this:

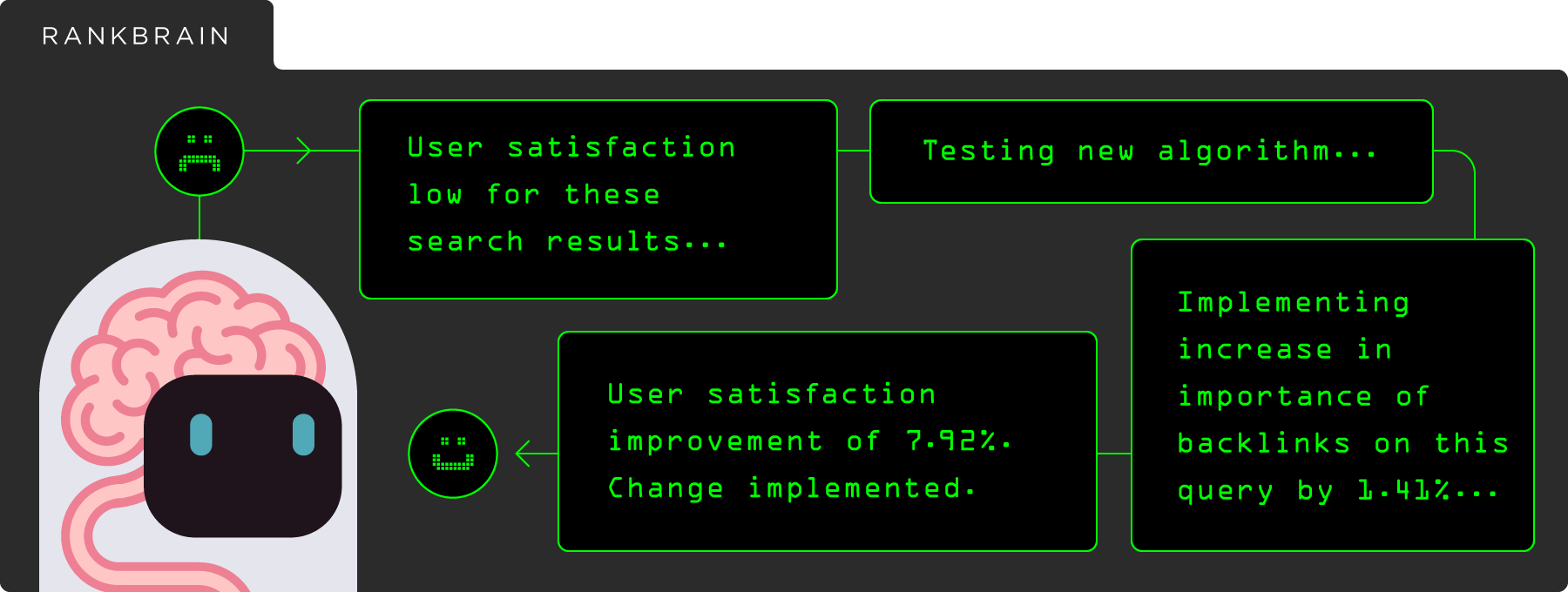

Although human intervention is still necessary, as shown by the famous Quality Raters Guidelines used by the Quality Raters who evaluate the relevance of certain search results (or of improvement tests), that’s less and less true. The main point is that the algorithm’s behavior changes depending on the query and adapts to the user. Dozens of micro-changes (or adjustments) are made throughout the year without us even realizing it.

RankBrain is upending SEO practices:

- Changes to known factors and their importance (quality versus quantity in link building, for example)

- The emergence of new factors (EAT and mobile-first indexing in 2018)

- Indexing errors, like the one that affected Search Engine Land at the end of 2018

- Ultra-personalization of search results based on the user’s search history or location

- Ranking factors adapted to the query or industry

This is particularly true with the arrival of BERT at the end of October 2019. Currently available only in English, it allows Google to understand Internet users’ natural language. The goal of BERT is to better understand the relationships between the words in a sentence, rather than treating words and expressions one by one. It’s Google’s way of trying to determine the exact meaning of queries that can sometimes be very specific, where certain linking words can drastically change the meaning of the search, for example when a negation is used.

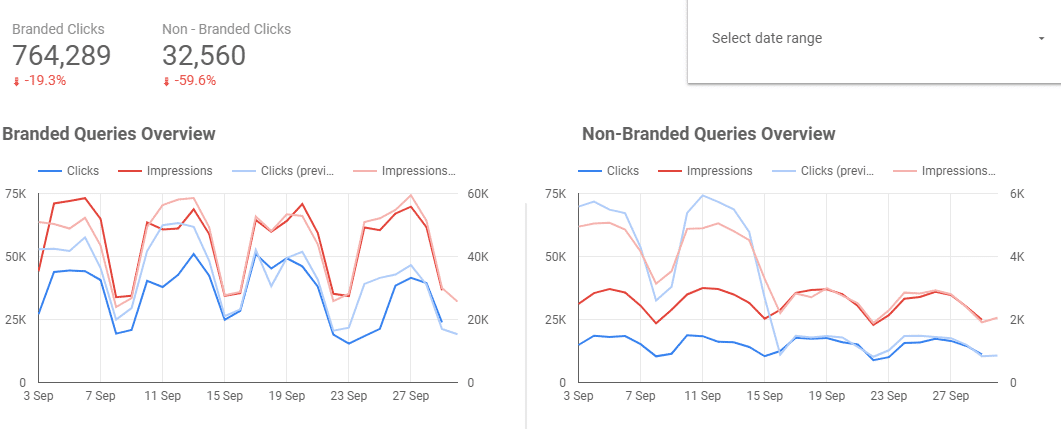

It’s becoming more and more difficult and tedious to get a clear overview of how a site is faring in terms of health and SEO ranking. Veterans still remember the days when Google Analytics stopped showing queries, replacing search terms with “not provided.” As for me, I experienced sheer panic when Google decided to stop showing anonymous queries in Google Search Console in August 2018. It still traumatizes me, and you can imagine what was going on in my head when a client asked me to look into why they were getting these results:

The traffic was still there, but the data had been pulled from the Search Console…

Over the past few years, we’ve also seen an explosion in the number of tools you need to get SEO work done properly, given how tight-fisted Google is becoming with information. Link analysis, semantic analysis, performance analysis, log analysis, keyword research, topic research for high-performing content, ranking analysis, analysis of the competitive landscape, crawling… SEO agencies use an average of 10 to 20 different tools to do their work. It is now highly recommended that you automate tasks that can take days (even weeks) to complete, and find a way to make all these different tools talk to each other. This is even more true in the era of Big Data and the 3Vs: Volume, Velocity and Variety.

Understanding the present and predicting the future

During my training with Rémi and Vincent, I heard an interesting anecdote. A survey was conducted at an SEO Camp, where three levels of SEO specialists were questioned: junior, mid-level and senior. They were asked about the chances of a particular page making it onto the first SERP for a particular query. Approximately a quarter of juniors got the right answer, a third of mid-level specialists and 40% of seniors. The conclusion? A coin toss would have a greater chance of correctly predicting a page’s ranking than an SEO specialist.

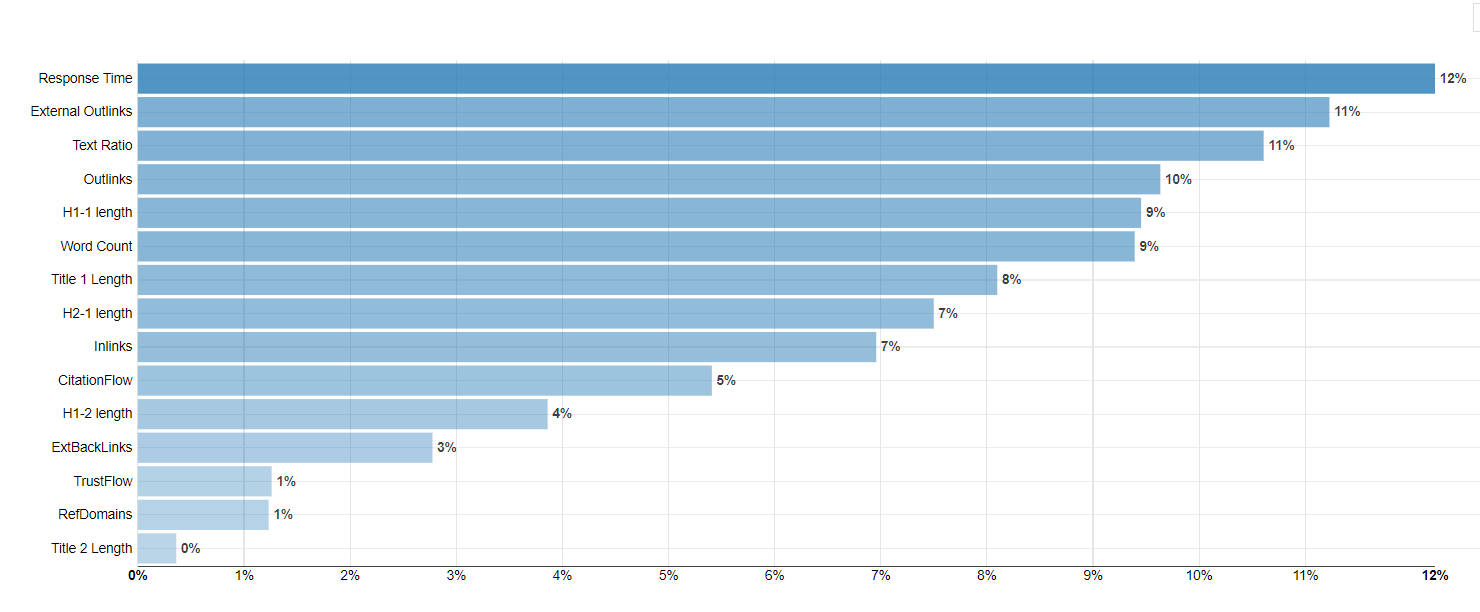

Though debate rages in the world of SEO about the importance and weight of the various ranking factors, there is a consensus about which are the most important. So all you’d have to do is find a methodology that lets you measure the weight of these factors, and how that weight varies industry by industry, across a volume of pages too large for a human to analyze. To summarize, rather than relying solely on the empirical experience of an SEO specialist, using data science, reverse engineering and machine learning would go a long way toward helping a specialist correctly predict a page’s ranking.

This methodology exists and is based on the use of Dataiku. Rémi and Vincent have built a semi-automated process that allows them, for example, to analyze the following elements for any given site:

- The first 100 search results for X queries (10,000, 20,000…)

- Crawl of the 100 pages turned up by these queries

- Retrieval of the backlink profile for the X URLs analyzed
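To make the shape of that data concrete, here is a minimal sketch of how the three sources listed above might be joined into one row per query and URL. It is not Rémi and Vincent’s Dataiku process: every field name, URL and value is invented for illustration, and the cutoff of 10 positions for the “first SERP” is an assumption.

```python
# Hypothetical inputs: all names and values below are invented for illustration.
serp_results = [
    # (query, url, position in the search results)
    ("running shoes", "https://example.com/a", 3),
    ("running shoes", "https://example.org/b", 27),
    ("trail shoes", "https://example.com/a", 12),
]

crawl_data = {
    # url -> on-page features collected by a crawler
    "https://example.com/a": {"load_time_s": 0.8, "word_count": 1400, "depth": 1},
    "https://example.org/b": {"load_time_s": 2.9, "word_count": 300, "depth": 4},
}

backlink_data = {
    # url -> summary of the backlink profile
    "https://example.com/a": {"referring_domains": 120},
    "https://example.org/b": {"referring_domains": 4},
}

FIRST_PAGE_CUTOFF = 10  # assumption: "first SERP" means positions 1 to 10


def build_rows(serp, crawl, backlinks):
    """Join the three sources into one flat row per (query, URL) pair."""
    rows = []
    for query, url, position in serp:
        if url not in crawl or url not in backlinks:
            continue  # skip URLs with no crawl data or no backlink data
        row = {"query": query, "url": url, "position": position}
        row.update(crawl[url])
        row.update(backlinks[url])
        # Target label for a later model: is the page on the first SERP?
        row["first_page"] = int(position <= FIRST_PAGE_CUTOFF)
        rows.append(row)
    return rows


rows = build_rows(serp_results, crawl_data, backlink_data)
for row in rows:
    print(row["query"], row["url"], row["first_page"])
```

Each row then carries both the candidate ranking criteria and a yes/no label, which is the kind of table a prediction model can be trained on.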

Once everything has been retrieved and gathered into a gigantic file thousands of lines long, it’s “enough” to screen it (also automatically) with several calculation methods (random forest, linear regression, XGBoost). Each of these has a different success rate depending on the SEO criteria analyzed and the questions asked (for example: which ranking criteria matter most for this industry? Or: does my page, still in pre-production, have a chance of ranking on the first SERP for a query once it’s online?). This methodology has a success rate as high as 90%, meaning it behaves very similarly to the Google algorithm, and it allows you to make informed SEO decisions based on an analysis of a large volume of keywords. Any criterion used by Google is thus potentially analyzable and measurable as long as it’s crawlable:

- Site speed

- Number and quality of inbound links

- Text ratio

- Freshness of content

- Presence of the keyword in the different tags and content

- Position in the site architecture

- Etc.
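As a rough illustration of the idea, the sketch below trains a random forest to predict whether a page lands on the first SERP and then ranks the criteria by importance. It uses scikit-learn rather than Dataiku, and the data is entirely synthetic: the feature names, the rule that generates the labels and the resulting accuracy are assumptions made up for the example, not results from Rémi and Vincent’s work.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
n_pages = 2000

# Hypothetical criteria, loosely named after the list above.
feature_names = [
    "load_time_s", "referring_domains", "text_ratio",
    "content_age_days", "keyword_in_title", "depth",
]

# Synthetic feature matrix: one row per page, one column per criterion.
X = np.column_stack([
    rng.uniform(0.2, 5.0, n_pages),   # load_time_s
    rng.poisson(30, n_pages),         # referring_domains
    rng.uniform(0.05, 0.9, n_pages),  # text_ratio
    rng.integers(0, 1000, n_pages),   # content_age_days
    rng.integers(0, 2, n_pages),      # keyword_in_title (0 or 1)
    rng.integers(1, 7, n_pages),      # depth in the site architecture
])

# Invented ground truth: links and the title keyword help, slowness hurts.
# The other three columns have no effect on the label by construction.
score = (0.08 * X[:, 1] + 1.5 * X[:, 4] - 0.6 * X[:, 0]
         + rng.normal(0, 0.5, n_pages))
y = (score > np.median(score)).astype(int)  # 1 = on the first SERP

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

model = RandomForestClassifier(n_estimators=100, random_state=0)
model.fit(X_train, y_train)

# Share of held-out pages whose first-SERP status is predicted correctly.
accuracy = model.score(X_test, y_test)
print(f"accuracy on held-out pages: {accuracy:.2f}")

# Rank the criteria by how much the forest relied on each of them.
ranked = sorted(
    zip(feature_names, model.feature_importances_),
    key=lambda pair: pair[1],
    reverse=True,
)
for name, importance in ranked:
    print(f"{name:20s} {importance:.3f}")
```

On real data the same two outputs answer the two questions raised earlier: the held-out accuracy plays the role of the “success rate”, and the importance ranking suggests which criteria matter most for the set of queries analyzed.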

It’s therefore possible to know quickly and accurately which criteria should be tackled first to improve a site’s ranking in a particular industry. Like here:

As I watched Rémi and Vincent present their methodology at the conference nearly two years ago, I was skeptical but fascinated by the precision with which they were able to “understand” RankBrain. Today I am just as fascinated by the potential of this methodology, but also convinced that it’s the right path to follow: understanding the present to predict the future.