Digital Media Consultant

Receptivity: the new programmatic performance lever

Studies have shown that up to 93% of online ads are either not seen or ignored, and that approximately 44% of digital ad spend is wasted on ads that users never see. One of the main reasons for this is the widespread adoption of mobile devices, a shift that has drastically changed our media consumption habits in recent decades. We process information faster and faster, and the multitude of screens we are exposed to has led to a drop in audience receptivity and, as a result, to wasted ad investments. In this article, you will learn how our experts have used a brand new technology developed in Montreal to help our clients reduce ad waste and increase ROI.

Introducing the technology

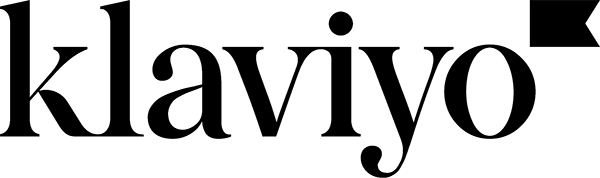

At the beginning of 2019, we approached CONTXTFUL, a Montreal tech start-up with a new mobile solution. Their technology makes it possible to analyze the human context and environment behind a user’s device while they are browsing the web. It does this by collecting and analyzing multiple non-private data points captured by the sensors in mobile devices. In keeping with “privacy by design,” the technology guarantees the anonymity of the user, in line with GDPR, IAB and CCPA standards.

A user’s receptivity is calculated by looking at several variables: their level of concentration, level of movement, posture and whether or not their device is being held in their hands.

It struck us that this technology presented an interesting opportunity to combat ad waste among unreceptive audiences.

Our media team were among the very first users of this technology. Below, we will explain in detail how we worked with our clients to get the most out of their programmatic buys.

Practical application

In collaboration with several of our clients, we ran tests to determine whether there was a strong correlation between CONTXTFUL’s receptivity score and the performance of mobile programmatic media buys.

For the purposes of the exercise, we are going to walk you through the practical application of one of the tests in question. It was run during a new-customer acquisition campaign where our goal was to generate a maximum number of qualified sessions for an e-commerce site.

1. Analysis of the average level of audience receptivity

First, we measured the average receptivity score of users exposed to the programmatic media campaign. The score was relatively high, but that finding, though important, wasn’t enough on its own for us to act on. We needed to take the experiment further to demonstrate the real value this score offers our clients, and how it would allow them to optimize their media buys and limit their ad waste.

2. Analysis of receptivity broken down by the sites and apps our audiences visited

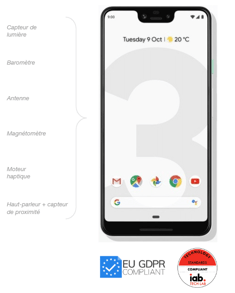

We then started to analyze our audience’s average receptivity score across the spectrum of sites and apps where we were making our programmatic buys.

This allowed us to rank content publishers based on the score obtained on their properties. Using this ranking, we were able to build an “exclusion list” of the sites and applications that recorded a low level of audience receptivity, in order to maximize our investment on properties where average audience receptivity was high.
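To make the ranking step concrete, here is a minimal sketch of how an exclusion list could be derived from impression-level scores. It is an illustration only, not CONTXTFUL’s API or our production tooling: the 0-to-1 score scale, the `threshold` value, the `min_impressions` guard and the property names are all assumptions made for the example.

```python
from collections import defaultdict
from statistics import mean


def build_exclusion_list(impressions, threshold, min_impressions=1):
    """Rank properties by average receptivity and flag the low scorers.

    impressions: iterable of (property_name, receptivity_score) pairs.
    threshold: hypothetical cut-off below which a property is excluded.
    min_impressions: hypothetical guard against ranking on too little data.
    """
    scores_by_property = defaultdict(list)
    for property_name, score in impressions:
        scores_by_property[property_name].append(score)

    # Average score per property, highest first.
    ranking = sorted(
        (
            (name, mean(scores))
            for name, scores in scores_by_property.items()
            if len(scores) >= min_impressions
        ),
        key=lambda item: item[1],
        reverse=True,
    )
    exclusion_list = [name for name, average in ranking if average < threshold]
    return ranking, exclusion_list


# Made-up sample data, assuming scores between 0 and 1.
sample_impressions = [
    ("news-site.example", 0.8),
    ("news-site.example", 0.7),
    ("game-app.example", 0.2),
    ("game-app.example", 0.3),
    ("blog.example", 0.6),
]
ranking, exclusion_list = build_exclusion_list(sample_impressions, threshold=0.5)
```

In practice the cut-off is a judgment call: set it too high and reach shrinks, too low and the low-receptivity inventory stays in the buy.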

Does focusing our investments on properties with more receptive audiences allow us to limit ad waste and increase performance metrics for programmatic buys? That’s exactly the question we were aiming to answer when we set up an A/B test splitting our target audience in two.

3. Activation and results

The first half of the audience, the control group, was targeted across the full range of inventory available in our DSP. The second half, the test group, was targeted only on inventory with high receptivity scores (that is, excluding the sites and applications on the exclusion list described above).
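A split like this one can be sketched in a few lines. The example below assumes a deterministic 50/50 assignment based on a hash of an anonymous user identifier; that is one common way to split an audience, not necessarily how the DSP did it in this campaign. The function names, the `salt` value and the property names are hypothetical.

```python
import hashlib


def assign_group(user_id: str, salt: str = "receptivity-test") -> str:
    """Assign a user to 'control' or 'test', always the same way for the same ID."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    return "test" if int(digest, 16) % 2 else "control"


def is_eligible(group: str, property_name: str, exclusion_list) -> bool:
    """Control sees all inventory; test skips properties on the exclusion list."""
    return group == "control" or property_name not in exclusion_list


# Made-up exclusion list for illustration.
excluded = {"game-app.example"}
group = assign_group("anonymous-user-123")
can_bid = is_eligible(group, "news-site.example", excluded)
```

Hashing keeps each user in the same group for the whole test, which prevents one person from being exposed to both treatments.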

After several weeks of testing, we established a strong statistical correlation between the receptivity scores provided by CONTXTFUL at the moment the ads were served and the subsequent activity on our client’s site. The higher the receptivity at the time of the ad impression, the higher the campaign performance metrics.

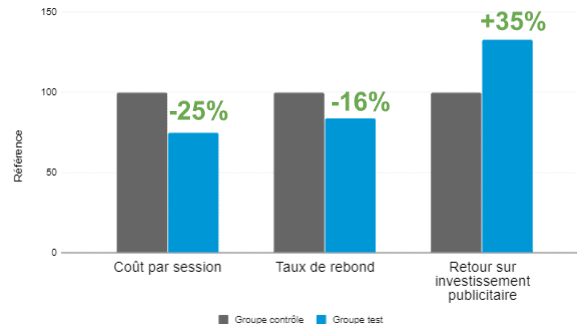

A high level of mobile receptivity increases the acquisition rate for new qualified users and customers. The results show that the test group (exposed to inventory excluding the sites and apps with low receptivity) had an average cost per session 25% lower than that of the control group (exposed to the full range of inventory, with no exclusions). From a qualitative standpoint, the sessions from the test group were also of far higher quality, as shown by a bounce rate 16% lower than that of the control group.
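For readers who want to reproduce this kind of comparison on their own campaigns, the arithmetic is simple. The sketch below uses made-up spend and session figures chosen only to show how a “25% lower” cost per session is read; they are not the campaign’s actual data, and the function names are our own.

```python
def cost_per_session(spend: float, sessions: int) -> float:
    """Media spend divided by the number of sessions it generated."""
    if sessions <= 0:
        raise ValueError("sessions must be positive")
    return spend / sessions


def relative_change(test_value: float, control_value: float) -> float:
    """Change of the test group relative to control (-0.25 means 25% lower)."""
    return (test_value - control_value) / control_value


# Illustrative figures only, NOT the results of the campaign described here.
control_cps = cost_per_session(spend=1200.0, sessions=600)  # 2.00 per session
test_cps = cost_per_session(spend=1200.0, sessions=800)     # 1.50 per session
change = relative_change(test_cps, control_cps)             # -0.25
```

The same `relative_change` helper applies to bounce rate or return on ad spend, as long as both groups are measured over the same period.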

In addition to achieving the primary goal of the campaign, the test group generated a return on ad investment that was 35% higher than the control group.

After repeating the exercise for several clients, we also observed that the inventory of Quebec news media reaches very receptive users, unlike other properties whose inventory is saturated with advertising noise.

To conclude, using this technology in our programmatic media campaigns opens up a whole new dimension of mobile programmatic optimization. In a multi-platform context where users’ attention is constantly divided, we can now go beyond quality metrics such as viewability. We can reduce ad waste while ensuring that our ads are served to an audience that not only sees them, but is receptive to the message.